This guide applies to managed clusters only. For imported clusters, worker management is handled by your cloud provider or yourself, depending on your cluster type.

Prerequisites

- An active managed cluster (status shows “Active” in the dashboard)

- A server or machine to connect (see supported operating systems below)

- Server meets minimum requirements: 2 CPU, 4GB RAM (4 CPU, 8GB RAM recommended for production)
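Before connecting a machine, you can check it against the minimum requirements above. The following is a minimal sketch rather than an official CNAP tool: the function name `meets_minimum` and the 10% memory slack are assumptions, added because a machine sold as "4GB" usually reports slightly less in `/proc/meminfo`.

```shell
#!/bin/sh
# Minimums taken from the prerequisites list above.
MIN_CPUS=2
MIN_MEM_GB=4

# meets_minimum CPUS MEM_KB
# Exit status 0 when both values meet the minimum.
# Assumption: allow 10% slack on memory, since the kernel reserves some RAM.
meets_minimum() {
    min_kb=$((MIN_MEM_GB * 1024 * 1024 * 9 / 10))
    [ "$1" -ge "$MIN_CPUS" ] && [ "$2" -ge "$min_kb" ]
}

# Read the CPU count and total memory (in kB) from the running system.
cpus=$(nproc 2>/dev/null || echo 0)
mem_kb=$(awk '/^MemTotal:/ {print $2}' /proc/meminfo 2>/dev/null || echo 0)

if meets_minimum "$cpus" "${mem_kb:-0}"; then
    echo "OK: ${cpus} CPU, ${mem_kb} kB RAM"
else
    echo "Below minimum: ${cpus} CPU, ${mem_kb} kB RAM"
fi
```

Run it on the server itself. For production, raise `MIN_CPUS` and `MIN_MEM_GB` to the recommended 4 and 8.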

How to Add Workers

Get Worker Setup Command

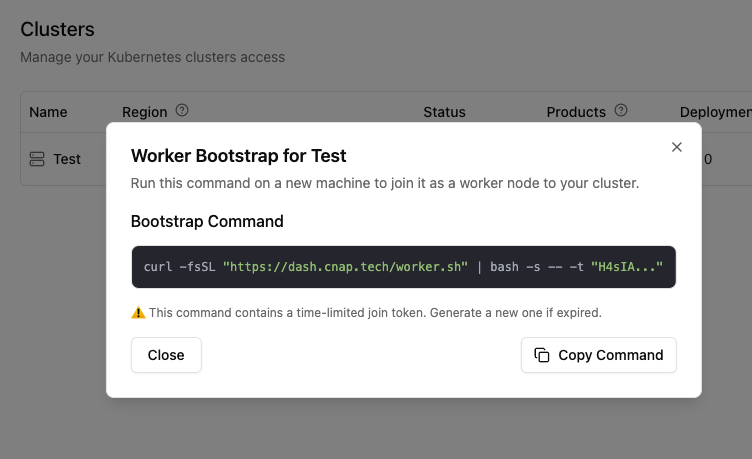

In your clusters dashboard, click on your cluster and find the “Quick Actions” section. Click “Add a worker” and copy the bootstrap command.

The command looks like:

Bootstrap command

Join token details: The token expires 30 minutes after generation and can be used multiple times to join multiple machines.

Connect and Run Setup Command

SSH into your server:

SSH to server

Then execute the bootstrap command you copied from the dashboard. The script automatically installs Kubernetes components, joins the worker to your cluster, and configures networking and security.

Automated Provisioning with Cloud-init

For fully automated worker provisioning, use cloud-init when creating servers on Hetzner, AWS, DigitalOcean, or any other cloud provider. The server automatically joins your cluster on first boot, with no SSH required.

Copy Cloud-init Config

In your cluster dashboard, click “Add a worker” and switch to the “Cloud-init” tab. Copy the configuration:

Cloud-init config

Create Server with Cloud-init

When creating a new server in your cloud provider’s dashboard:

- Hetzner: Paste into “Cloud config” under “Additional features”

- AWS EC2: Paste into “User data” under “Advanced details”

- DigitalOcean: Paste into “User data” under “Advanced options”

- Vultr: Paste into “Cloud-Init User-Data”

- GCP: Paste into “Automation > Startup script”
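If a server created this way does not join the cluster, one way to investigate is to log in and ask cloud-init what it did. This is a hedged sketch: the helper name `show_cloud_init_state` is hypothetical, and the log path is the cloud-init default, which an image may override.

```shell
#!/bin/sh
# show_cloud_init_state
# Prints cloud-init's own status report and the tail of its output log.
# Assumption: the log is at the default path /var/log/cloud-init-output.log.
show_cloud_init_state() {
    if command -v cloud-init >/dev/null 2>&1; then
        # "status --long" reports whether cloud-init is running, done, or errored.
        cloud-init status --long || true
        # The output log captures stdout/stderr of the commands cloud-init ran.
        tail -n 40 /var/log/cloud-init-output.log 2>/dev/null || true
    else
        echo "cloud-init is not installed on this machine"
    fi
}

show_cloud_init_state
```

Reading the log may require root on some images.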

Cloud-init’s runcmd executes once on first boot only. If you need to re-run the bootstrap (e.g., after a reset), you’ll need to use the manual command method or recreate the server.

Supported Operating Systems

CNAP workers support a wide range of Linux distributions and Windows Server:

- Debian: 11 (Bullseye), 12 (Bookworm)

- Ubuntu: 22.04 LTS or later

- Red Hat Enterprise Linux: 7.9, 8.10, 9.5

- CentOS Stream: 9, 10

- Oracle Linux Server: 8.9, 9.3

- Amazon Linux: 2023

- Fedora: 41 (Cloud Edition)

- Fedora CoreOS: Stable stream

- Alpine Linux: 3.19, 3.22

- Flatcar Container Linux

- Windows Server: 2019 (experimental support)
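On a Linux machine, `/etc/os-release` identifies the distribution. The sketch below is an illustration, not part of CNAP: the helper name `distro_family_supported` is hypothetical, and it matches only the distribution family by its `ID` value. You still need to compare the reported version against the list above yourself.

```shell
#!/bin/sh
# distro_family_supported ID
# Exit status 0 when the os-release ID belongs to a family listed above.
# IDs: ol = Oracle Linux, amzn = Amazon Linux; Fedora CoreOS also reports "fedora".
distro_family_supported() {
    case "$1" in
        debian|ubuntu|rhel|centos|ol|amzn|fedora|alpine|flatcar) return 0 ;;
        *) return 1 ;;
    esac
}

if [ -r /etc/os-release ]; then
    # os-release is a shell-compatible file that defines ID, VERSION_ID, etc.
    . /etc/os-release
    if distro_family_supported "${ID:-unknown}"; then
        echo "Listed family: ${ID} ${VERSION_ID:-}"
    else
        echo "Not in the list: ${ID:-unknown}"
    fi
else
    echo "No /etc/os-release found"
fi
```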

What’s Next?

Once workers are connected, you can:

- Deploy Products Yourself: Trigger a deployment of your product from the dashboard to your own workspace

- Package Your Software: Turn your applications into sellable products

- Add More Capacity: Scale your infrastructure as demand grows

- Learn About Clusters: Understand how clusters work in CNAP

Troubleshooting

Worker not appearing in dashboard

- Verify the server has internet connectivity

- Check that the setup command was copied correctly

- Ensure you’re running as root (with sudo)

- Wait 2-3 minutes for the registration process
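The first and third checks can be scripted on the server. This is a rough sketch under stated assumptions: `is_root` is a hypothetical helper, and `PROBE_URL` defaults to a placeholder site, not the CNAP control plane endpoint, so a successful probe only shows that general outbound HTTPS works.

```shell
#!/bin/sh
# is_root UID
# Exit status 0 when the given numeric user ID is 0 (root).
is_root() {
    [ "$1" -eq 0 ]
}

if is_root "$(id -u)"; then
    echo "Running as root"
else
    echo "Not root: rerun the setup command with sudo"
fi

# Placeholder URL (assumption); override it with an endpoint you care about.
PROBE_URL="${PROBE_URL:-https://example.com}"

if command -v curl >/dev/null 2>&1; then
    # -f fails on HTTP errors, --max-time bounds the wait to 10 seconds.
    if curl -fsS --max-time 10 -o /dev/null "$PROBE_URL"; then
        echo "Outbound HTTPS works: $PROBE_URL"
    else
        echo "Outbound HTTPS failed: $PROBE_URL"
    fi
else
    echo "curl not found; skipping connectivity probe"
fi
```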

Setup command fails

- Check server meets minimum requirements (2 CPU, 4GB RAM)

- Ensure you’re using a supported Linux distribution (see supported operating systems)

- Verify no conflicting Docker/Kubernetes installations

- Verify server has outbound networking access to the control plane (outbound HTTPS connections are allowed by default, but check if behind a firewall)
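To look for leftover Docker or Kubernetes tooling, you can check which related commands are already on the `PATH`. This is only a sketch: `find_existing_tools` is a hypothetical helper, and the list of command names is an assumption about what might conflict, not a list published by CNAP.

```shell
#!/bin/sh
# find_existing_tools NAME...
# Prints each given name that resolves to a command on this machine.
find_existing_tools() {
    for tool in "$@"; do
        if command -v "$tool" >/dev/null 2>&1; then
            echo "$tool"
        fi
    done
}

# Assumed candidates; adjust the list to match your environment.
found=$(find_existing_tools docker containerd kubelet kubeadm kubectl)

if [ -n "$found" ]; then
    echo "Existing tools found:"
    echo "$found"
else
    echo "No existing Docker/Kubernetes tools found on PATH"
fi
```

A hit is not automatically a conflict, but it tells you what to inspect before re-running the setup command.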

Worker behind firewall or NAT?

Worker behind firewall or NAT?

CNAP’s managed KaaS architecture is designed so workers don’t need public IP addresses. Workers automatically create outbound connectivity tunnels to the control plane, allowing the control plane to reach the kubelet securely. This works behind firewalls, NAT, and restrictive networks.